In this work, we present an efficient visual SLAM system designed to tackle both short-term and long-term illumination challenges. Our system adopts a hybrid approach that combines deep learning techniques for feature detection and matching with traditional backend optimization methods. Specifically, we propose a unified CNN that simultaneously extracts keypoints and structural lines. These features are then associated, matched, triangulated, and optimized in a coupled manner. Additionally, we introduce a lightweight relocalization pipeline that reuses the built map, where keypoints, lines, and a structure graph are used to match the query frame with the map. To enhance the applicability of the proposed system to real-world robots, we deploy and accelerate the feature detection and matching networks using C++ and NVIDIA TensorRT. Extensive experiments conducted on various datasets demonstrate that our system outperforms other state-of-the-art visual SLAM systems in illumination-challenging environments. Efficiency evaluations show that our system can run at a rate of 73Hz on a PC and 40Hz on an embedded platform.

System Design

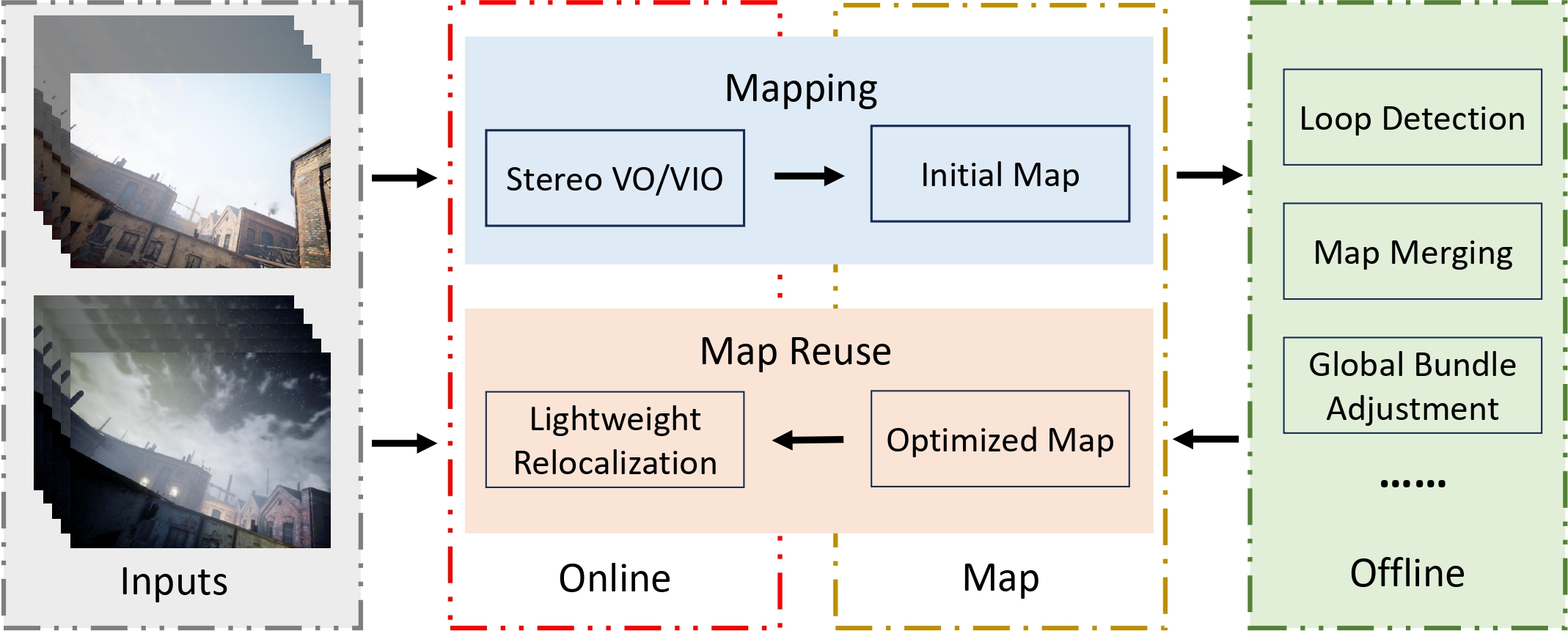

The proposed system is hybrid because we need both the robustness of data-driven approaches and the accuracy of geometric methods. It consists of three main components: stereo VO/VIO, offline map optimization, and lightweight relocalization. (1) Stereo VO/VIO: We propose a point-line-based visual odometry that can handle both stereo and stereo-inertial inputs. (2) Offline map optimization: We implement several commonly used plugins, such as loop detection, pose graph optimization, and global bundle adjustment. The system can be extended for other map-processing purposes by adding customized plugins. (3) Lightweight relocalization: We propose a multi-stage relocalization method to reuse the built map. It can be used for long-term localization using only a monocular camera, without an initial pose guess or feature tracking.
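
The plugin idea behind the offline map optimization can be sketched as a chain of callables applied to the built map in order. This is only an illustration in Python, not the system's actual C++ interface: `BuiltMap`, `run_offline_optimization`, and the three placeholder plugins are hypothetical names, and the plugin bodies only record that they ran.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class BuiltMap:
    """Hypothetical stand-in for the built map; fields are illustrative only."""
    keyframes: List[str] = field(default_factory=list)
    history: List[str] = field(default_factory=list)


# Assumption: a plugin is any callable that takes the map and returns it updated.
MapPlugin = Callable[[BuiltMap], BuiltMap]


def loop_detection(m: BuiltMap) -> BuiltMap:
    m.history.append("loop_detection")  # placeholder for the real work
    return m


def pose_graph_optimization(m: BuiltMap) -> BuiltMap:
    m.history.append("pose_graph_optimization")  # placeholder
    return m


def global_bundle_adjustment(m: BuiltMap) -> BuiltMap:
    m.history.append("global_bundle_adjustment")  # placeholder
    return m


def run_offline_optimization(m: BuiltMap, plugins: List[MapPlugin]) -> BuiltMap:
    """Apply each plugin to the map in the order given."""
    for plugin in plugins:
        m = plugin(m)
    return m
```

Under this assumption, a customized map-processing step is just one more callable appended to the list passed to `run_offline_optimization`.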

Feature Detection

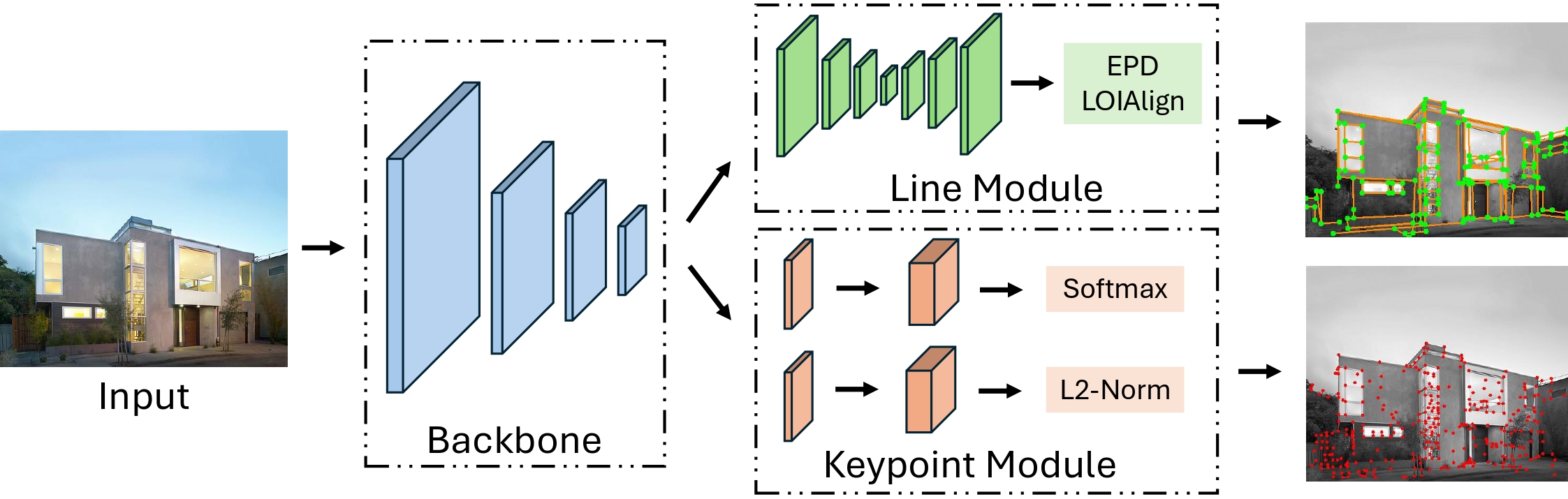

To improve the efficiency of learning-based feature detection, we propose PLNet, a unified model for both keypoint and line detection. It consists of a shared backbone, a keypoint module, and a line module. PLNet can output keypoints, descriptors, and structural lines simultaneously at a speed of 79.4Hz.

Stereo VO/VIO

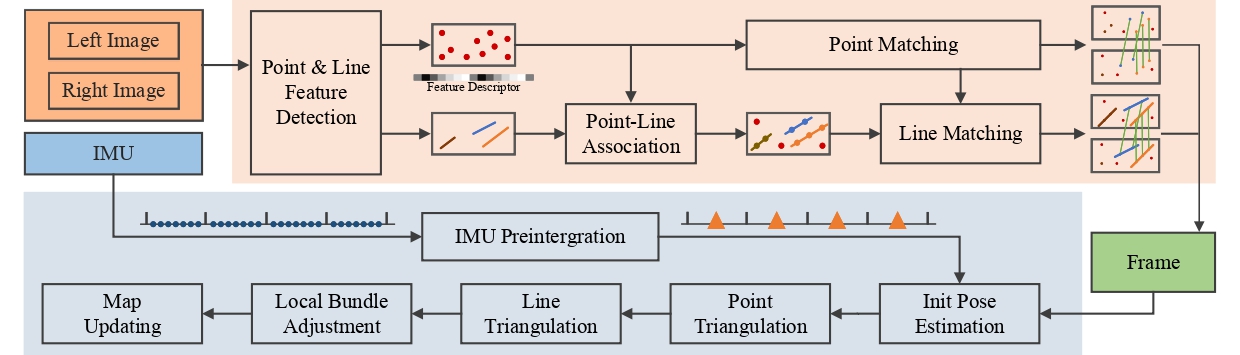

We propose a point-line-based stereo visual odometry to build the initial map. It is a hybrid system that uses both a learning-based front-end and a traditional optimization backend. For each stereo image pair, we first employ the proposed PLNet to extract keypoints and line features. Then a GNN (LightGlue) is used to match keypoints. In parallel, we associate line features with keypoints and match them using the keypoint matching results. After that, we perform an initial pose estimation and reject outliers. Based on the results, we triangulate the 2D features of keyframes and insert them into the map. Finally, local bundle adjustment is performed to optimize points, lines, and keyframe poses. If an IMU is available, its measurements are processed using the IMU preintegration method and added to the initial pose estimation and local bundle adjustment.
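
The step of associating lines with keypoints and matching lines through the keypoint matches can be illustrated with a small sketch. It is not the system's implementation: the function names, the 3-pixel distance threshold, and the minimum of two shared keypoints are assumptions chosen for illustration. A keypoint is associated with a line if it lies close to the segment, and two lines are matched if enough of their associated keypoints are matched to each other.

```python
import math
from collections import Counter


def point_to_segment_distance(p, a, b):
    """Euclidean distance from 2D point p to the segment a-b."""
    (px, py), (ax, ay), (bx, by) = p, a, b
    dx, dy = bx - ax, by - ay
    seg_len_sq = dx * dx + dy * dy
    if seg_len_sq == 0.0:  # degenerate segment: a and b coincide
        return math.hypot(px - ax, py - ay)
    # Project p onto the line through a and b, then clamp to the segment.
    t = ((px - ax) * dx + (py - ay) * dy) / seg_len_sq
    t = max(0.0, min(1.0, t))
    return math.hypot(px - (ax + t * dx), py - (ay + t * dy))


def associate_points_with_lines(keypoints, lines, max_dist=3.0):
    """For each line, collect indices of keypoints within max_dist pixels.

    max_dist is an assumed threshold, not a value from the paper.
    """
    return [
        {i for i, p in enumerate(keypoints)
         if point_to_segment_distance(p, a, b) <= max_dist}
        for (a, b) in lines
    ]


def match_lines(assoc0, assoc1, point_matches, min_shared=2):
    """Match lines across two frames by voting with matched keypoints.

    assoc0, assoc1: per-line sets of keypoint indices in each frame.
    point_matches: dict from keypoint index in frame 0 to index in frame 1.
    min_shared: assumed minimum number of shared matched keypoints.
    """
    # Invert assoc1: keypoint index -> lines it belongs to in frame 1.
    lines_of_point1 = {}
    for j, pts in enumerate(assoc1):
        for k in pts:
            lines_of_point1.setdefault(k, []).append(j)

    matches, used = {}, set()
    for i, pts in enumerate(assoc0):
        votes = Counter()
        for k0 in pts:
            k1 = point_matches.get(k0)
            if k1 is None:
                continue  # keypoint has no match in frame 1
            for j in lines_of_point1.get(k1, []):
                votes[j] += 1
        if not votes:
            continue
        j, score = votes.most_common(1)[0]
        if score >= min_shared and j not in used:
            matches[i] = j
            used.add(j)
    return matches
```

One consequence of this design is that lines need no descriptor of their own: line matching inherits its robustness from the keypoint matcher, at the cost of missing lines that have too few keypoints nearby.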

Video

Publications

- AirSLAM: An Efficient and Illumination-Robust Point-Line Visual SLAM System. IEEE Transactions on Robotics (T-RO), vol. 41, pp. 1673–1692, 2025.

This work is based on

- AirVO: An Illumination-Robust Point-Line Visual Odometry. IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS), pp. 3429–3436, 2023.
