🙋♀️ Syllabus for Fall 2023 🙌

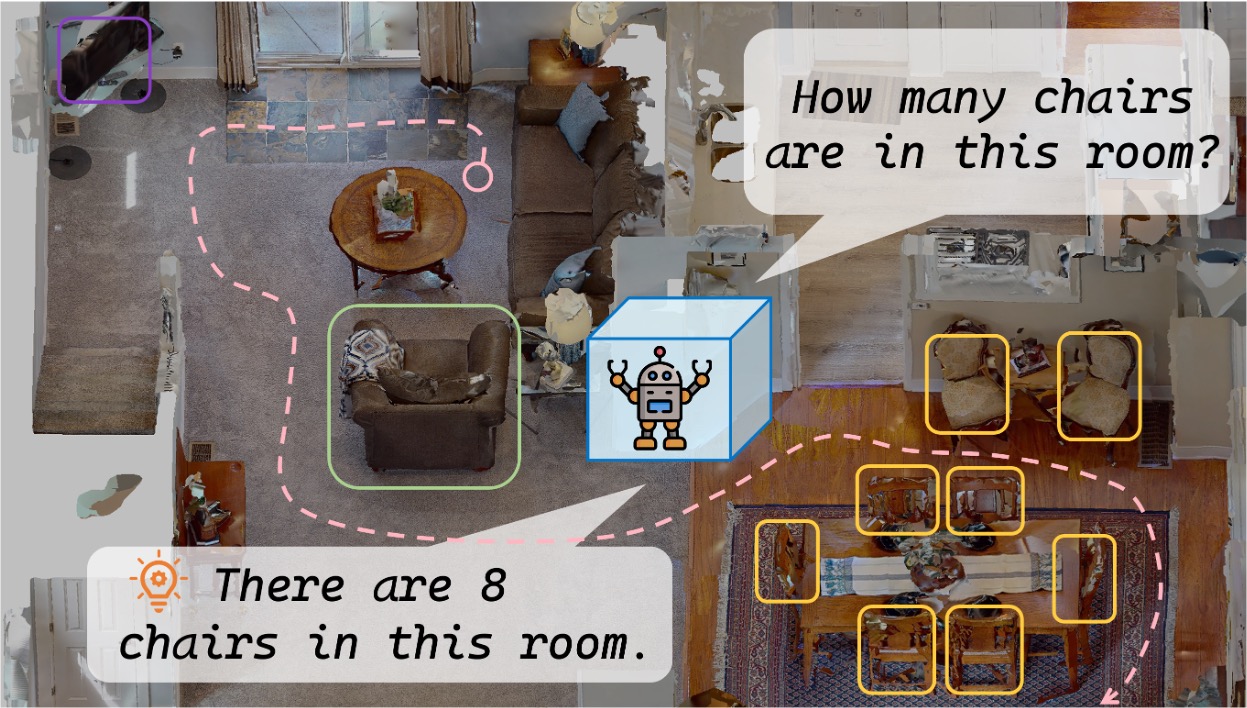

This course gives a systematic introduction to the geometric principles and computational methods for recovering three-dimensional (3D) scene structure and camera motion from multiple two-dimensional (2D) images or a sequence of them. The first part of the course provides a complete and unified characterization of all fundamental geometric relationships among multiple 2D images of 3D points, lines, planes, symmetric structures, etc., together with the associated geometric reconstruction algorithms. Complementary to geometry, the second part of the course introduces recent progress in supervised and unsupervised learning-based methods for detecting and recognizing local features or global geometric structures in 2D images, enabling robust and accurate 3D reconstruction. Although the principles and methods introduced are fundamental and general, the course emphasizes applications in robotics, augmented reality, and autonomous 3D mapping and navigation. The course is entirely self-contained: the necessary background in linear algebra, rigid-body motion, image formation, and camera models will be covered at the very beginning.

Lecturer

| Name | Title | |
|---|---|---|
| | Assistant Professor | |

Prerequisites

- Programming concepts.
  - Experience with Python is required. Classic SLAM systems are built in C++, while learning-based systems are built on PyTorch. As this is a graduate seminar in CSE, students are expected to be able to write and debug software of moderate size (~1,000 lines of code) in PyTorch.

- Computer Vision.
  - The course assumes prior coursework in computer vision and image processing. Prior experience applying deep learning to computer vision is a plus.

- Linear Algebra and Probability.
  - Background in signal processing, optimization, and machine learning will help you better appreciate certain aspects of the course material.

Grades

The course will be graded on class participation (10%), homework (30%), and course projects (70%). Course projects will be assigned by the instructors and may involve experimental work, theoretical work, or both. Students are expected to identify, understand, and present research related to their projects; to identify novel ideas that extend prior work or apply ideas from other applications to their own; and to advance the state of the art in robot perception.

Be creative! Virtually any project assigned to you has research potential, provided it is well executed. You will work in a team of two or three students, and your final report is expected to document who did what. Your deliverables are a project report and a short (15-20 min) talk in the last week of class; if your project includes experimental work, you must also submit your code. You must submit a brief (under one page) project proposal by the midterm and discuss your ideas with me before that date. Finally, you will submit a research-paper-style final report before the grading deadline.

Fall 2023 Schedule (Tentative Topics)

Part I: Multiple-view Geometry and Reconstruction Algorithms

- Representation of a three-dimensional moving scene
- Image formation and camera calibration
- Multi-view geometry
- Image primitives and correspondence
- 6D pose estimation
- 3D mapping and loop closure detection

Part II: Learning for Recognition and Reconstruction

- Learning-based feature detection and matching
- Structure from motion and visual SLAM
- End-to-end visual odometry
- Learning-based indirect visual odometry
- Learning to detect and match global structures in multiple views
- Applications in autonomous navigation

Homeworks

- Project 1: Structure from motion
- Project 2: Feature-based VO + optimization
- Project 3: Learning-based VO

Project

- A 15-minute in-class presentation for each project.
- The final report must be formatted as a conference paper.