This workshop consists of a seminar and a challenge on robot learning and SLAM. We aim to present the latest results on the theory and practice of both traditional and modern techniques for robot learning, robot perception, and SLAM. A series of contributed and invited talks by academic leaders and renowned researchers will discuss ground-breaking perception and mapping methods for long-term autonomy based on current cutting-edge traditional solutions and modern learning methods. The workshop will also discuss the current challenges and future research directions and will include posters and spotlight talks to facilitate interaction between the speakers and the audience. We plan to have a hybrid format with in-person speakers/attendees and a live broadcast to convey the message to a broader audience.

Updates on Oct 06 2023

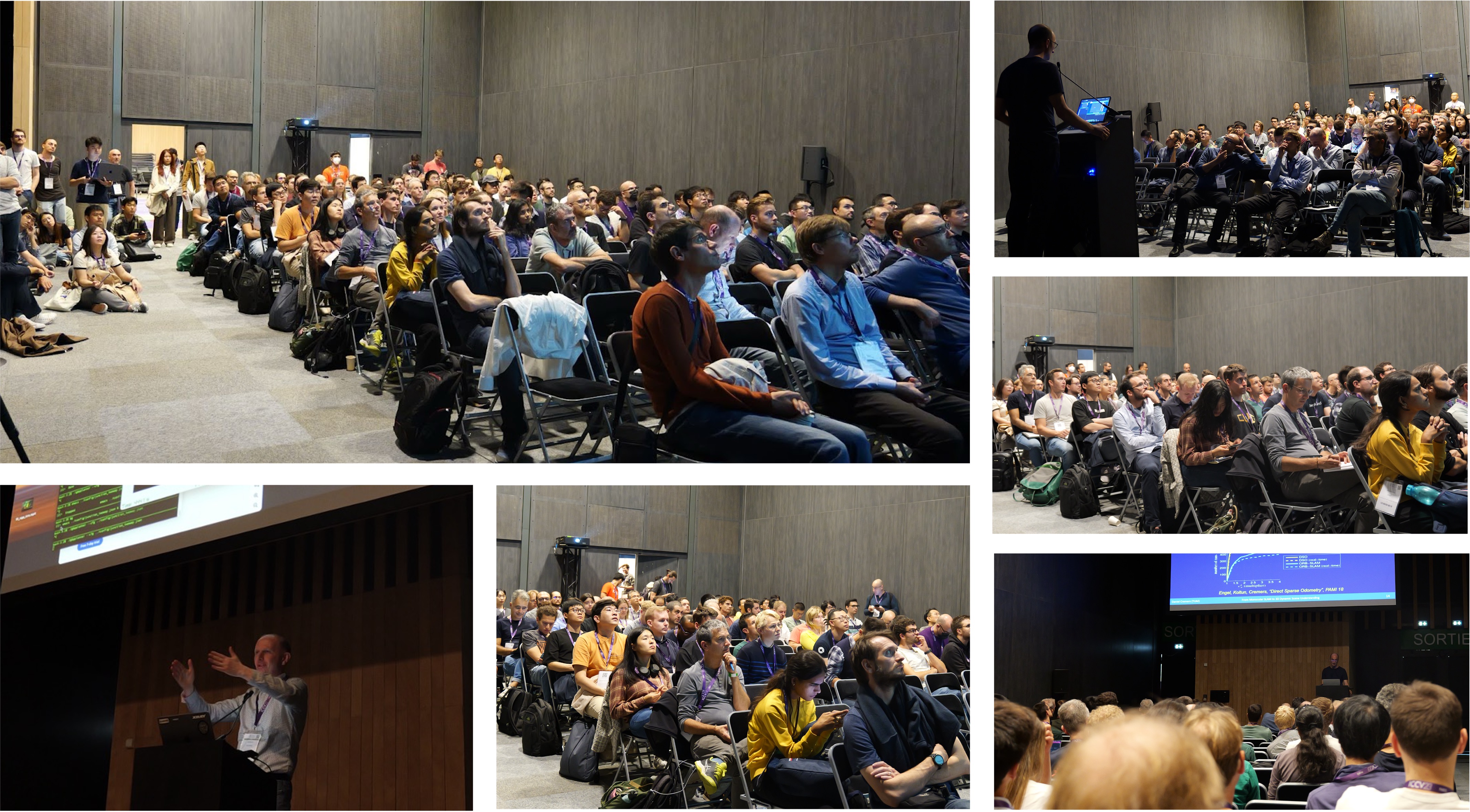

- The workshop was successfully held with the number of participants surpassing the meeting room’s capacity!

- Additionally, over 3K viewers tuned in on YouTube in just three days!

Seminar

Session 1 (9:00-10:45 AM)

| Presenter | Session Title & Slides Link | Time | YouTube Link |
|---|---|---|---|
| | Welcome message by organizers & overview of workshop | 9:00 - 9:10 AM | |
| PhD Candidate Project Scientist, Robotics Institute Carnegie Mellon University | SLAM Challenge Summary | 9:10 - 9:35 AM | |
| Associate Professor University of Oxford | Robust Multi Sensor SLAM with Learning and Sensor Fusion | 9:35 - 9:55 AM | |
| Associate Professor Massachusetts Institute of Technology | From SLAM to Spatial Perception: Hierarchical Models, Certification, and Self-Supervision | 10:00 - 10:20 AM | |
| Systems Scientist Carnegie Mellon University | From Lidar SLAM to Full-scale Autonomy and Beyond | 10:25 - 10:45 AM | |

Coffee Break and Posters (10:45 - 11:00 AM)

Session 2 (11:00 AM - 12:35 PM)

| Presenter | Session Title & Slides Link | Time | YouTube Link |
|---|---|---|---|
| Full Professor ETH Zurich | Visual Localization and Mapping: From Classical to Modern | 11:00 - 11:20 AM | |
| Assistant Professor in Department of Computer Science and Engineering University at Buffalo, SUNY | Imperative SLAM and PyPose Library for Robot Learning | 11:25 - 11:45 AM | |
| Professor of Robot Vision Imperial College London | Distributed Estimation and Learning for Robotics | 11:50 AM - 12:10 PM | |
| Professor of Informatics and Mathematics Technical University of Munich | From Monocular SLAM to 3D Dynamic Scene Understanding | 12:15 - 12:35 PM | |

Lunch Break and Posters (12:35 - 1:30 PM)

Session 3 (1:30 - 3:40 PM)

| Presenter | Session Title & Slides Link | Time | YouTube Link |
|---|---|---|---|
| Professor University of Toronto | Learning Perception Components for Long-Term Path Following | 1:30 - 1:50 PM | |
| Assistant Professor, Robotics Institute Carnegie Mellon University | Probabilistic Pose Prediction | 1:55 - 2:15 PM | |
| Assistant Professor King's College London | Advancing the Role of SLAM-Based Active Mapping in Wearable Robotics | 2:20 - 2:40 PM | |
| Associate Professor Seoul National University | Advancing SLAM with Learning: Integrating High-Level Scene Understanding with Non-conventional Sensors | 2:45 - 3:05 PM | |
| Associate Professor in Robotics Institute Carnegie Mellon University | Learning for Sonar and Radar SLAM | 3:10 - 3:30 PM | |
| | Concluding Remarks | 3:30 - 3:40 PM | |

Challenge

Pushing SLAM Towards All-weather Environments

The challenge is live now! Please visit the main page to join our three challenge tracks from the links below.

Please note the deadline for submissions: 25th September 2023, 11:59 PM EST

A robust odometry system is indispensable for autonomous robots performing navigation, exploration, and locomotion in unknown environments. In recent years, robots have been deployed in increasingly complex environments for a broad spectrum of applications such as off-road driving, search-and-rescue in extreme environments, and robotic rovers on planetary missions. Despite the progress made, most state estimation algorithms are still vulnerable in long-term operation and still struggle in these scenarios. A key requirement for advancing SLAM toward long-term autonomy is the availability of high-quality datasets that cover a variety of challenging scenarios.

To push the limits of robust SLAM and robust perception, we will organize a SLAM challenge and evaluate performance from virtual to real-world robotics. For virtual environments,

- Multi Degradation: The dataset contains a broad set of perceptually degraded environments, such as darkness, airborne obscurants (fog, dust, smoke), and a lack of prominent perceptual features in self-similar areas (Figure 2).

- Multi Robots: The dataset is collected by various heterogeneous robots, including aerial, wheeled, and legged robots, over multiple seasons. Most importantly, our dataset also provides the extrinsics and communication signals between robots, which allow the maps to be merged into a single world frame. These features are very important for researchers studying multi-agent SLAM.

- Multi Spectral: The dataset contains several modalities: not only visual, LiDAR, and inertial data but also thermal data, which lies beyond what the human eye can see.

- Multi Motion: Existing popular datasets such as KITTI and Cityscapes cover only very limited motion patterns, mostly moving straight forward with small left or right turns. This regular motion is too simple to sufficiently test a visual SLAM algorithm. Our dataset covers much more diverse motion combinations in 3D space, which makes it significantly more difficult than existing datasets.

- Multi Dynamic: Our dataset contains dynamic objects including humans, vehicles, dust, and snow.

- Friendly to learning methods: Our dataset provides a benchmark not only for traditional methods but also for learning-based methods.
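As a rough illustration of the map-merging point in the Multi Robots item, the sketch below maps points from two robots' local frames into one shared world frame using known extrinsics. It is a minimal example under stated assumptions: the helper names `make_transform` and `to_world`, the yaw-only rotation, and all numeric values are hypothetical and are not taken from the dataset or its tooling.

```python
import numpy as np

def make_transform(yaw_rad, translation):
    """Build a 4x4 homogeneous transform from a yaw angle and a translation."""
    c, s = np.cos(yaw_rad), np.sin(yaw_rad)
    T = np.eye(4)
    T[:3, :3] = [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]
    T[:3, 3] = translation
    return T

def to_world(T_world_robot, points_robot):
    """Map an (N, 3) array of points from a robot's local frame into the world frame."""
    homogeneous = np.hstack([points_robot, np.ones((len(points_robot), 1))])
    return (T_world_robot @ homogeneous.T).T[:, :3]

# Hypothetical extrinsics: each robot's map origin expressed in the world frame.
T_world_a = make_transform(0.0, [0.0, 0.0, 0.0])
T_world_b = make_transform(np.pi / 2, [10.0, 0.0, 0.0])

# Hypothetical local maps (a few 3D points per robot).
map_a = np.array([[1.0, 0.0, 0.0], [2.0, 0.0, 0.0]])
map_b = np.array([[1.0, 0.0, 0.0]])

# Once every map is expressed in the same frame, merging is a concatenation.
merged_map = np.vstack([to_world(T_world_a, map_a), to_world(T_world_b, map_b)])
```

In practice the extrinsics would come from the dataset's calibration files rather than being hand-written, and a full multi-agent SLAM system would refine them jointly with the maps.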

The dataset can be used for a number of visual tasks, including optical flow, visual odometry, lidar odometry, thermal odometry, and multi-agent odometry. Preliminary experiments show that methods performing well on established benchmarks such as KITTI do not achieve satisfactory results on the SubT-MRS dataset. In this competition, we will focus on robustness and efficiency. We will provide an evaluation metric (the same as the one used for the KITTI dataset) and an evaluation website for comparison and submission.
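For readers unfamiliar with the KITTI odometry metric mentioned above, it averages relative translational and rotational errors over sub-trajectories of fixed lengths (100 m to 800 m). The following is a simplified sketch of that idea, not the official KITTI devkit and not the challenge's evaluation code; the function names, the default `step`, and the input format (sequences of 4x4 pose matrices) are assumptions made for illustration.

```python
import numpy as np

def path_lengths(poses):
    """Cumulative distance travelled along a sequence of 4x4 poses."""
    positions = np.array([T[:3, 3] for T in poses])
    steps = np.linalg.norm(np.diff(positions, axis=0), axis=1)
    return np.concatenate([[0.0], np.cumsum(steps)])

def relative_errors(gt, est, lengths=(100, 200, 300, 400, 500, 600, 700, 800), step=10):
    """Return (mean translational error as a fraction, mean rotational error in rad/m)."""
    dist = path_lengths(gt)
    t_errs, r_errs = [], []
    for first in range(0, len(gt), step):
        for length in lengths:
            # First frame lying at least `length` metres further along the path.
            last = int(np.searchsorted(dist, dist[first] + length))
            if last >= len(gt):
                continue
            # Relative motion over the segment, for ground truth and estimate.
            gt_delta = np.linalg.inv(gt[first]) @ gt[last]
            est_delta = np.linalg.inv(est[first]) @ est[last]
            error = np.linalg.inv(est_delta) @ gt_delta
            # Rotation angle of the residual, recovered from the trace.
            cos_angle = np.clip((np.trace(error[:3, :3]) - 1.0) / 2.0, -1.0, 1.0)
            r_errs.append(np.arccos(cos_angle) / length)
            t_errs.append(np.linalg.norm(error[:3, 3]) / length)
    if not t_errs:
        raise ValueError("trajectory too short for the requested segment lengths")
    return float(np.mean(t_errs)), float(np.mean(r_errs))
```

Because the errors are computed on relative motion over segments, the metric measures drift per metre travelled rather than absolute position error, so a single early mistake does not dominate the score for the whole trajectory.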

The workshop has associated new benchmark datasets (the SubT-MRS and TartanAir V2 datasets) that we will publish three months before the workshop.

Workshop Registration

Workshop Organizers & Committee

- Assistant Professor, Spatial AI & Robotics Lab University at Buffalo, SUNY

- PhD Candidate Carnegie Mellon University

- Project Scientist, Robotics Institute Carnegie Mellon University

- PhD Candidate Carnegie Mellon University

- Postdoctoral Research Fellow, Biorobotics Lab Harvard University

- Research Scientist US Army Research Laboratory

- Research Associate Professor, Robotics Institute Carnegie Mellon University

- Robust Perception and Mobile Robotics Lab Seoul National University

- Senior researcher, Autonomous Systems Research Team Microsoft

- Ph.D. student, Spatial AI & Robotics Lab University at Buffalo, SUNY

- Ph.D. student, Spatial AI & Robotics Lab University at Buffalo, SUNY